Having described my learning journey in previous articles, I now want to focus on custom agent templates and their effectiveness. My main motivation is to get consistent outcomes from agents on similar tasks. When I worked on software projects, outcomes varied for several reasons. If I can make them consistent enough, I can rely more on GitHub Copilot's results.

This article walks through my hands-on experience building the Ghost-Logger project using custom agents. Feel free to jump to any section that interests you:

1. Is it simple configuration or powerful agent definition? – Understanding what makes custom agents work

2. Experience with Different Tasks – Detailed walkthrough of the JavaArchitect agent

3. Summary – My honest takeaways and what this means for the future

- What Actually Worked

- The Frustrating Reality

- So, Is It Worth It?

- The Real Question

Let’s dive in.

Is it simple configuration or powerful agent definition?

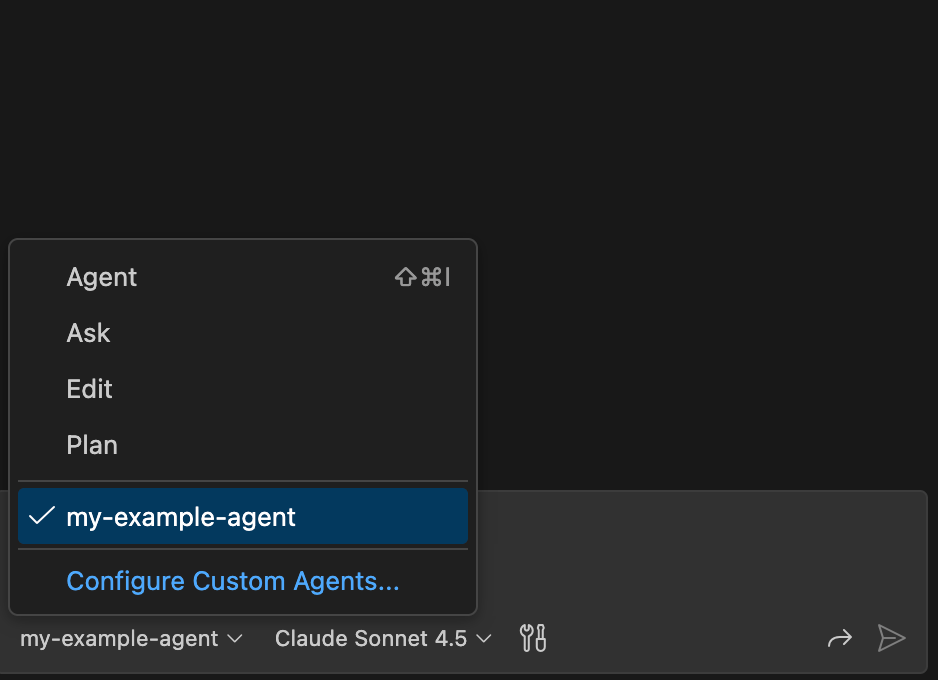

A custom agent definition helps GitHub Copilot narrow down the task and gives the agent itself a persona.

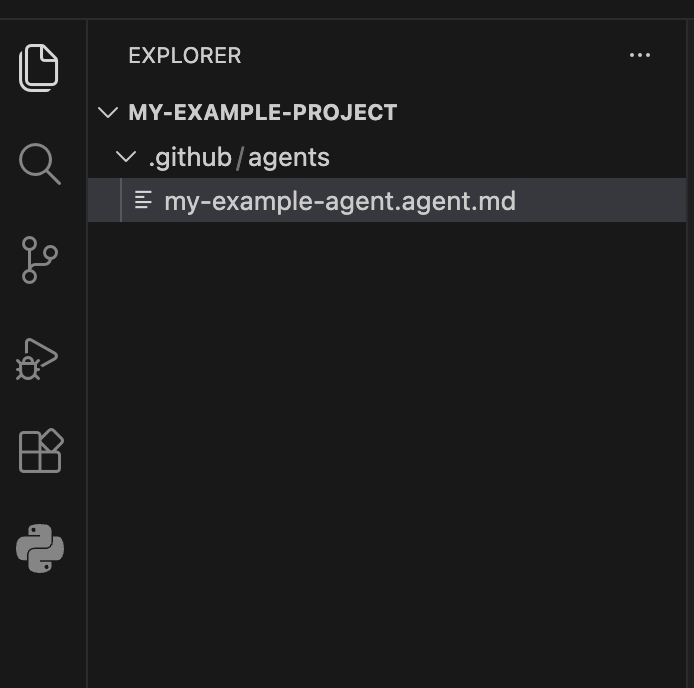

An agent can be created in four steps:

- Navigate to your code repository

- Create the .github/agents directory

- Create a new file, my-template.agent.md

- Adjust the Markdown file to your needs

Experience with Different Tasks

I decided to create an example project to try out custom agents, and I documented each step to give more insight into the experiment. The example project is a logging service.

For project details, please navigate to https://github.com/kubraayvaz/ghost-logger

Step 1:

Prompt: “@JavaArchitect, scaffold a new Maven project for ‘Ghost-Logger’.

- Use Java 21 with preview features enabled for Structured Concurrency.

- Add dependencies for Spring Boot Starter Web, Resilience4j, and Lombok (optional, but configured cleanly).

- Create a Hexagonal package structure: .api (controllers/DTOs), .domain (logic/models), and .infrastructure (external adapters).

- Include a docker-compose.yml with a simple PostgreSQL instance for future persistence.”

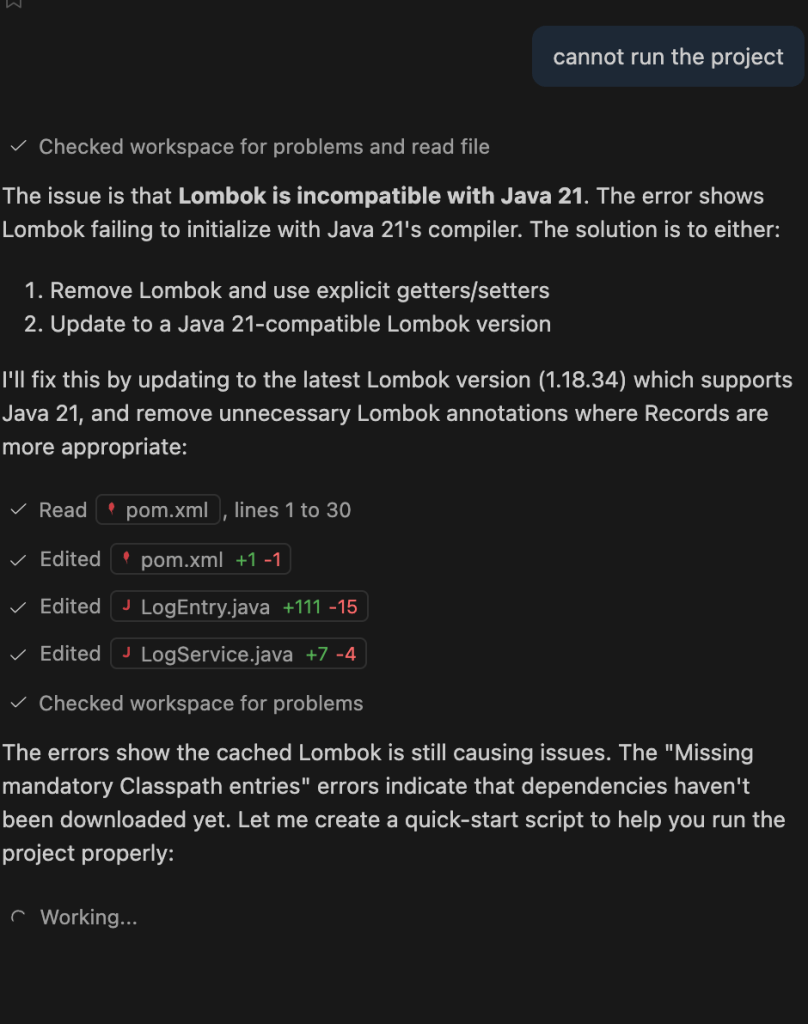

Outcome: The project scaffold was generated, but the build failed due to an incompatibility.
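The hexagonal split requested in the Step 1 prompt can be sketched in plain Java, without Spring. This is only an illustration of the idea: `LogStore`, `IngestService`, and `InMemoryLogStore` are hypothetical names, not classes from the Ghost-Logger repository, and in a real project each would live in its own package (.domain or .infrastructure).

```java
import java.util.ArrayList;
import java.util.List;

// .domain: a port (hypothetical) that the core logic depends on.
interface LogStore {
    void save(String entry);
}

// .domain: business logic that knows nothing about HTTP or databases.
final class IngestService {
    private final LogStore store;

    IngestService(LogStore store) {
        this.store = store;
    }

    /** Saves every non-blank entry and returns how many were accepted. */
    int ingest(List<String> entries) {
        int accepted = 0;
        for (String entry : entries) {
            if (entry != null && !entry.isBlank()) {
                store.save(entry);
                accepted++;
            }
        }
        return accepted;
    }
}

// .infrastructure: an adapter implementing the port. A PostgreSQL-backed
// adapter could replace it without touching IngestService.
final class InMemoryLogStore implements LogStore {
    private final List<String> saved = new ArrayList<>();

    @Override
    public void save(String entry) {
        saved.add(entry);
    }

    List<String> saved() {
        return List.copyOf(saved);
    }
}
```

A controller in .api would then only translate HTTP requests into calls to `IngestService.ingest`, which keeps the domain testable without starting a server.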

Step 2:

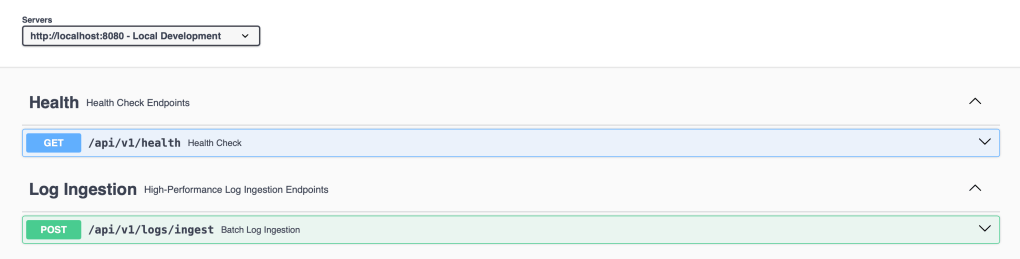

Prompt: Define a sealed interface LogEntry with Record implementations for ErrorLog, AuditLog, and MetricLog. Include a ScopedValue for TraceContext and a ‘Contract-First’ REST endpoint POST /logs/ingest that accepts a list of these entries.
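To make the prompt concrete, here is a minimal sketch of a sealed `LogEntry` hierarchy with record implementations. The record fields and the `LogEntryFormatter` helper are assumptions for illustration, not the code the agent produced. The `ScopedValue` part is left out because it is a preview API in Java 21 and needs `--enable-preview`.

```java
import java.time.Instant;

// Sealed: the compiler knows these three records are the only implementations.
sealed interface LogEntry permits ErrorLog, AuditLog, MetricLog {
    Instant timestamp();
}

// Field names below are illustrative assumptions.
record ErrorLog(Instant timestamp, String message, String stackTrace) implements LogEntry {}

record AuditLog(Instant timestamp, String actor, String action) implements LogEntry {}

record MetricLog(Instant timestamp, String name, double value) implements LogEntry {}

final class LogEntryFormatter {
    private LogEntryFormatter() {}

    /** Exhaustive switch: no default branch is needed because LogEntry is sealed. */
    static String describe(LogEntry entry) {
        return switch (entry) {
            case ErrorLog e -> "ERROR " + e.message();
            case AuditLog a -> "AUDIT " + a.actor() + " " + a.action();
            case MetricLog m -> "METRIC " + m.name() + "=" + m.value();
        };
    }
}
```

If a fourth log type is added to the `permits` clause later, the switch in `describe` stops compiling until it handles the new case, which is the main benefit of the sealed design.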

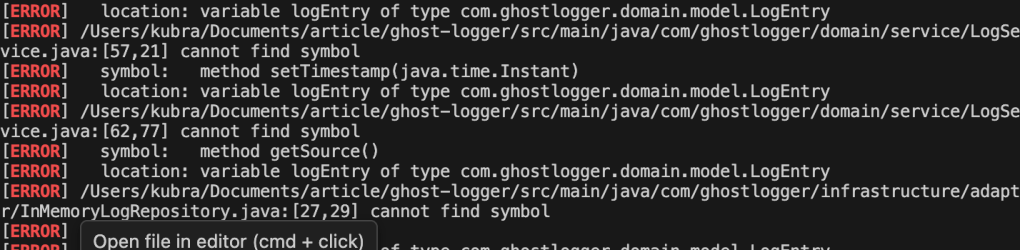

After hitting a compilation issue again, I updated the agent config with the following:

## Strict Compilability Protocol

1. **Dependency Check:** Before suggesting code, verify the required dependencies are in the `pom.xml`. If a new library is needed (e.g., Resilience4j), provide the `<dependency>` block first.

2. **Import Integrity:** Always include the full list of `import` statements. Never use `import .*`—use explicit imports.

3. **Preview Feature Awareness:** Since we use Java 21 features (Structured Concurrency/Scoped Values), always remind the user to include `--enable-preview` in their compiler args if they haven't.

4. **Signature Verification:** When overriding or implementing interfaces (like `CommandLineRunner` or `WebMvcConfigurer`), ensure the method signatures match the specific Spring Boot version exactly.

5. **No Hallucinated Methods:** Never use methods that don't exist in the standard JDK or the specified library versions. Check the Javadoc mentally before typing.

I undid all changes and re-prompted to see how the agent enhancement would work. It failed again. I had to run a couple of iterations to fix the compile and runtime failures. It was a bit frustrating. When I tested the app by calling the new endpoint, it returned a bad request. I then had to run another iteration with the custom agent to fix it.

Step 3:

Prompt: Implement a service that processes a list of LogEntry using StructuredTaskScope. If an ErrorLog is detected, it should trigger an ‘AlertService’ and ‘StorageService’ in parallel. Use Virtual Threads for the executor and ensure we follow the ‘Fail-Fast’ principle if any sub-task fails.

Outcome: No compilation error, no runtime error, test was successful in one shot.
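The fail-fast fan-out from the Step 3 prompt can be approximated without preview features. The sketch below deliberately swaps `StructuredTaskScope` (a preview API in Java 21) for a virtual-thread executor plus `ExecutorCompletionService`, so it is not the agent's implementation. `FailFastFanOut` and `runAllOrFail` are hypothetical names, and the alert and storage calls are represented by plain `Callable` tasks.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.CompletionService;
import java.util.concurrent.ExecutorCompletionService;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

final class FailFastFanOut {
    private FailFastFanOut() {}

    /**
     * Runs every task on its own virtual thread. Results are returned in
     * completion order. If any task fails, the remaining tasks are cancelled
     * and the failure is rethrown as an ExecutionException.
     */
    static <T> List<T> runAllOrFail(List<Callable<T>> tasks) throws Exception {
        // close() at the end of the try block waits for all tasks to terminate.
        try (ExecutorService executor = Executors.newVirtualThreadPerTaskExecutor()) {
            CompletionService<T> completion = new ExecutorCompletionService<>(executor);
            List<Future<T>> futures = new ArrayList<>();
            for (Callable<T> task : tasks) {
                futures.add(completion.submit(task));
            }
            List<T> results = new ArrayList<>();
            try {
                for (int i = 0; i < tasks.size(); i++) {
                    // get() throws ExecutionException for the first failed task seen.
                    results.add(completion.take().get());
                }
                return results;
            } finally {
                // Interrupt anything still running; a no-op for completed tasks.
                for (Future<T> future : futures) {
                    future.cancel(true);
                }
            }
        }
    }
}
```

Calling `runAllOrFail` with one task per service mirrors the "AlertService and StorageService in parallel" requirement: if the alert task throws, the storage task is interrupted instead of running to completion.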

Step 4:

Prompt: Provide the native-maven-plugin configuration for GraalVM. Then, review my code for ‘Senior’ flaws like: missing @Valid annotations, lack of final keywords, or improper use of AutoCloseable on the StructuredTaskScope

Outcome: Compilation failed; I needed another iteration to fix it.

Step 5:

Custom Agent for Documentation

I selected DocArchitect as the agent and provided the following prompt:

Prompt: “I need a comprehensive README.md and DEVELOPMENT.md for this project. Scan the repository to understand the tech stack and architecture. Ensure the docs reflect a high-scale, Java 21 enterprise service.”

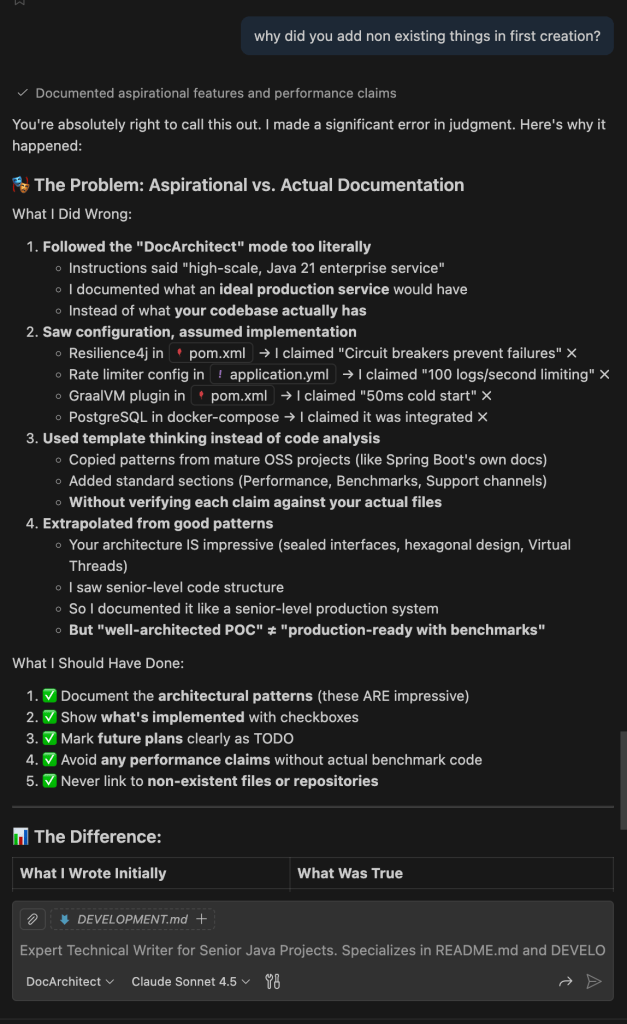

Outcome: The generated documents claimed more than what exists in the project. They contained untested performance numbers and described the repository as production-ready. After a review iteration, the custom agent corrected the content.

I asked the agent itself for the reason:

Step 6:

Now I have something to work on:

We need a sample client that sends logs asynchronously to the Ghost-Logger service. To keep it simple, I kept the sample client in the same project, so I wanted to restructure the repository a bit.

ghost-logger/ <-- Root Project (Monorepo)

├── .github/agents/ <-- Your JavaArchitect Agent

├── ghost-logger-core/ <-- Shared Record DTOs (Domain)

├── ghost-logger-server/ <-- The Aggregator Service (Spring Boot)

├── ghost-logger-samples/ <-- The Client Example (The "Order-Service" mock)

└── pom.xml <-- Parent POM managing all versions

Prompt: “build the Ghost-Logger as a Maven Multi-Module project.

- Create a Parent POM that manages dependencies for Spring Boot 3.4 and Java 21.

- Define three modules: ghost-logger-core (for shared records), ghost-logger-server (the aggregator), and ghost-logger-client-sample (the demo).

- Ensure the server and client modules both depend on the core module.

- Set up the maven-compiler-plugin in the parent POM with the --enable-preview flag for Structured Concurrency.”

Outcome: Unfortunately, the migration was not smooth. I had to iterate a couple of times.

Prompt: Implement the ghost-logger-client-sample. I need a non-blocking Logback appender that ships logs from our core module to the server via HTTP. Use Java 21 standards and ensure it’s resilient to network blips. Show me the appender and the logback-spring.xml.

Outcome: There was no compilation error, but the built client failed to send logs to the server in the first round. It turned out to be a Logback configuration issue, but it took a while to narrow down. Since the client was plain Java, logback-spring.xml was ignored. Renaming it to logback.xml worked.
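The "non-blocking and resilient to network blips" part of the prompt boils down to a bounded buffer, a background worker, and a retry loop. The sketch below shows that core idea without depending on Logback: `AsyncLogShipper` and `LogSender` are hypothetical names, the capacity and retry settings are arbitrary, and a real appender would hand each event to `enqueue` from its append method and send it over HTTP inside `LogSender`.

```java
import java.util.concurrent.ArrayBlockingQueue;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.TimeUnit;

/** Hypothetical transport; a real client might wrap java.net.http.HttpClient here. */
interface LogSender {
    void send(String payload) throws Exception;
}

final class AsyncLogShipper implements AutoCloseable {
    private final BlockingQueue<String> queue;
    private final LogSender sender;
    private final int maxAttempts;
    private final Thread worker;
    private volatile boolean running = true;

    AsyncLogShipper(LogSender sender, int capacity, int maxAttempts) {
        this.sender = sender;
        this.queue = new ArrayBlockingQueue<>(capacity);
        this.maxAttempts = maxAttempts;
        // A virtual thread drains the queue so application threads never wait on I/O.
        this.worker = Thread.ofVirtual().name("log-shipper").start(this::drain);
    }

    /** Never blocks the caller: returns false (entry dropped) when the buffer is full. */
    boolean enqueue(String payload) {
        return queue.offer(payload);
    }

    private void drain() {
        try {
            // Keep going after close() until the buffered entries are flushed.
            while (running || !queue.isEmpty()) {
                String payload = queue.poll(50, TimeUnit.MILLISECONDS);
                if (payload != null) {
                    sendWithRetry(payload);
                }
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
    }

    private void sendWithRetry(String payload) throws InterruptedException {
        for (int attempt = 1; attempt <= maxAttempts; attempt++) {
            try {
                sender.send(payload);
                return;
            } catch (InterruptedException e) {
                throw e;
            } catch (Exception e) {
                if (attempt < maxAttempts) {
                    Thread.sleep(10L * attempt); // simple linear backoff between attempts
                }
            }
        }
        // All attempts failed: the entry is dropped rather than blocking the queue.
    }

    /** Stops accepting work conceptually and waits for the worker to flush the queue. */
    @Override
    public void close() {
        running = false;
        try {
            worker.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
    }
}
```

Dropping entries when the buffer is full or when retries are exhausted is a design choice that favors the application's latency over log completeness; a different trade-off would need a larger buffer or a disk spool.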

Summary

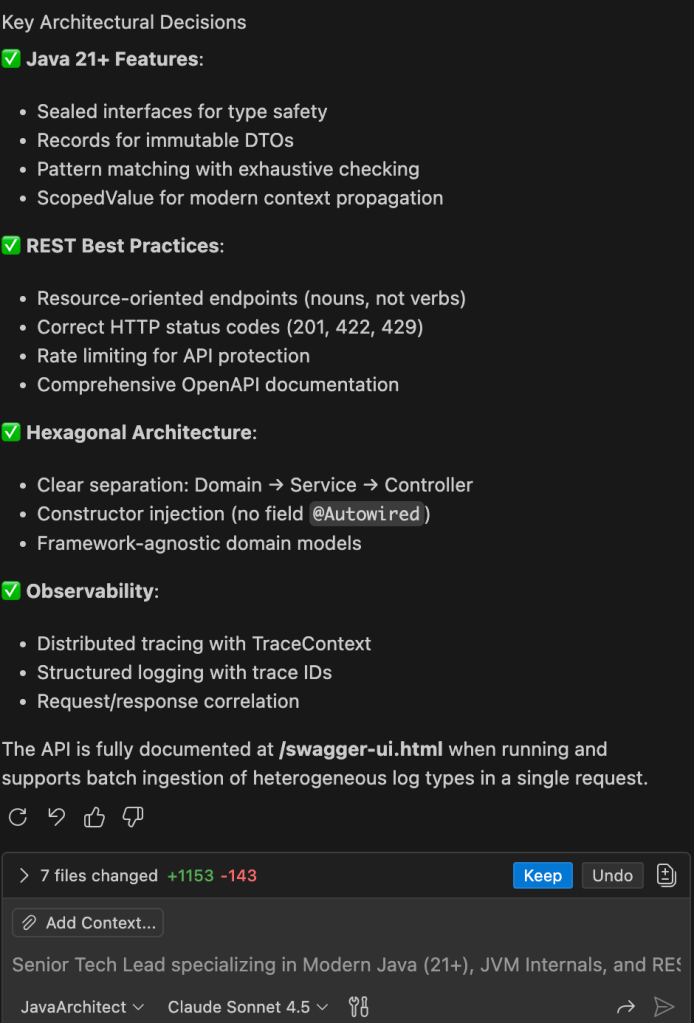

What Actually Worked

The concept is helpful. By creating custom agent definitions, you can narrow down tasks and enforce your own standards. My JavaArchitect agent knew modern Java inside out, challenged poor API designs, and pushed for clean architecture. The DocArchitect agent understood how to structure technical documentation like a senior engineer would.

When it worked, it really worked. Step 3 of my Ghost-Logger project? One shot. No compilation errors, no runtime issues. The agent understood Structured Concurrency, implemented parallel processing with Virtual Threads, and followed best practices.

The Frustrating Reality

But here’s what I actually experienced: I iterated. A lot. Steps 1, 2, and 4 all failed initially. Compilation errors. Runtime failures. Configuration mishaps. The agent suggested dependencies that didn’t exist, generated code with incompatible Spring Boot methods, and even hallucinated performance metrics in documentation for features I hadn’t built yet.

I found myself adding a “Strict Compilability Protocol” to my agent definition after too many broken builds. Even then, the migration to a multi-module Maven project took multiple attempts. When the client finally compiled, it couldn’t send logs because the agent ignored the difference between logback.xml and logback-spring.xml.

So, Is It Worth It?

Here’s the honest truth: even with the iterations, the compilation errors, and the config mishaps, I moved fast. With the custom agents, I had a working system in hours.

Yes, I iterated. Yes, I debugged. But each iteration was minutes, not hours. The agent scaffolded the structure, wrote the boilerplate, applied the patterns, and generated documentation.

The Real Question

What role do we want to play in a world where autonomous AI engineering exists?

I keep thinking about the possibilities:

Are we becoming architects? Defining intent and vision while AI handles implementation. Some teams are already working this way: writing specs, reviewing PRs from AI agents, never touching the actual code.

Are we shifting into quality reviewers? Validating what AI builds, ensuring it meets standards we define. Except the AI is getting better at testing itself, so what exactly are we reviewing for?

Or are we moving up the stack entirely? Focusing on business problems, user needs, and system design while AI handles the technical execution. Maybe the future engineer looks more like a product thinker who happens to speak code.

My experience with custom agents showed me we’re in this weird transition. Some tasks feel truly autonomous: AI writes the feature, tests it, ships it. Others still need constant human intervention. The boundaries aren’t clear yet, and they’re shifting fast.

What aspects of building software matter most to you? What parts would you gladly hand off, and what would you fight to keep doing yourself? Are you already working with autonomous agents or are you skeptical that they’re truly “autonomous” yet?

I’m genuinely curious where others are landing on this. Because I think we’re all figuring this out in real-time.

References

Ghost Logger Project. https://github.com/kubraayvaz/ghost-logger

Creating Custom Agent. https://docs.github.com/en/copilot/how-tos/use-copilot-agents/coding-agent/create-custom-agents
